KIVI

A Tuning-Free Asymmetric 2-bit Quantization for KV Cache

1University of Minnesota 2Rice University 3Texas A&M 4Stevens 5Johns Hopkins 6CMU

ICML 2024 · *equal contribution

TL;DR

The KV cache is the memory bottleneck of long-context LLM serving, and the obvious fix — quantize it — is harder than it looks at 2 bits. We study the element distribution of KV tensors in Llama, Falcon, and Mistral and find a clean, model-independent asymmetry: keys have a few fixed outlier channels and should be quantized along the channel axis, while values show no such structure and should be quantized per token. KIVI applies this rule with a hardware-friendly kernel and needs no calibration or fine-tuning. The result is up to 2.6× peak memory reduction, up to 4× larger batch size, and 2.35–3.47× end-to-end throughput at essentially the same quality.

Abstract

Efficiently serving large language models requires batching many requests to reduce per-request cost. But as batch size and context length grow, the key-value (KV) cache — which stores attention keys and values to avoid recomputation — dominates memory and speed. Quantization is the natural response, yet there has been little study of the element distribution of the KV cache itself. We conduct a systematic study and find that the key cache should be quantized per-channel (group along the channel dimension) while the value cache should be quantized per-token. From this we design KIVI, a tuning-free 2-bit KV cache quantization algorithm with a hardware-friendly implementation. KIVI lets Llama, Falcon, and Mistral keep essentially the same quality while using 2.6× less peak memory (weights included), enabling up to 4× larger batch sizes and 2.35–3.47× higher throughput on real inference workloads.

The observation: keys and values are not the same

Quantization has two knobs that matter: the bit-width and the group over which the scale and zero-point are shared. The folklore prescription has been to quantize everything per-token — i.e., share the scale across the hidden dimension, token by token. That works fine at 4 bits but collapses at 2 bits.
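
To make the two knobs concrete, here is a minimal NumPy sketch of asymmetric min-max quantization in which `bits` sets the bit-width and `axis` sets the dimension over which the scale and zero-point are shared. The function names `quantize_asym` and `dequantize` are hypothetical helpers written for this illustration, and the input is random data rather than a real KV cache.

```python
import numpy as np

def quantize_asym(x, bits, axis):
    """Asymmetric min-max quantization.

    The scale and zero-point (here, the group minimum) are shared along `axis`.
    """
    levels = 2 ** bits - 1
    lo = x.min(axis=axis, keepdims=True)
    hi = x.max(axis=axis, keepdims=True)
    scale = np.maximum(hi - lo, 1e-8) / levels   # guard against constant groups
    q = np.clip(np.round((x - lo) / scale), 0, levels).astype(np.uint8)
    return q, scale, lo

def dequantize(q, scale, lo):
    """Map integer codes back to approximate floating-point values."""
    return q.astype(np.float32) * scale + lo

rng = np.random.default_rng(0)
x = rng.standard_normal((8, 16)).astype(np.float32)   # (tokens, channels)

for bits in (4, 2):
    # axis=1 shares one scale across the channels of each token: "per-token"
    q, scale, lo = quantize_asym(x, bits, axis=1)
    err = np.abs(dequantize(q, scale, lo) - x).max()
    print(f"{bits} bits: {2 ** bits} levels, max abs error {err:.3f}")
```

Passing `axis=0` instead shares the scale across tokens within each channel, which is the per-channel grouping discussed next.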

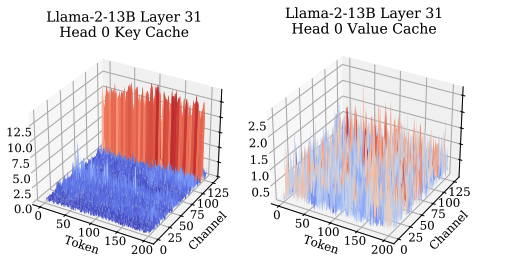

When we plotted the magnitude distribution of the KV tensors across many layers and many models, the reason jumped out. The key cache has a handful of channels with dramatically larger magnitudes than the rest (the classic activation-outlier pattern), and those outlier channels are the same ones at every token. A per-token scale has to stretch to fit the outliers, so almost all of its dynamic range is spent on them and the small-magnitude channels collapse onto one or two quantization levels. A per-channel scale gives every channel, outlier or not, its own range.
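
A small synthetic experiment illustrates the effect. This is a sketch under stated assumptions: the tensor is Gaussian noise with two artificially inflated channels standing in for the outlier channels, not a real key cache, and `fake_quant` is a hypothetical helper that quantizes and immediately dequantizes.

```python
import numpy as np

def fake_quant(x, bits, axis):
    """Quantize then dequantize, sharing scale and zero-point along `axis`."""
    levels = 2 ** bits - 1
    lo = x.min(axis=axis, keepdims=True)
    hi = x.max(axis=axis, keepdims=True)
    scale = np.maximum(hi - lo, 1e-8) / levels
    return np.round((x - lo) / scale) * scale + lo

rng = np.random.default_rng(0)
tokens, channels = 128, 64
outliers = [5, 40]                    # assumption: fixed outlier channel indices

keys = rng.standard_normal((tokens, channels))
keys[:, outliers] *= 30.0             # same channels are large at every token

normal = np.ones(channels, dtype=bool)
normal[outliers] = False              # mask selecting the non-outlier channels

per_token = fake_quant(keys, bits=2, axis=1)     # one scale per token (row)
per_channel = fake_quant(keys, bits=2, axis=0)   # one scale per channel (column)

# Measure the reconstruction error on the small-magnitude channels only.
err_token = np.abs(per_token - keys)[:, normal].mean()
err_channel = np.abs(per_channel - keys)[:, normal].mean()
print(f"per-token   mean abs error on normal channels: {err_token:.3f}")
print(f"per-channel mean abs error on normal channels: {err_channel:.3f}")
```

With per-token scaling, each row's step size is set by the outlier channels, so the ordinary channels are reconstructed much more coarsely than with per-channel scaling.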

The value cache looks completely different. There is no persistent outlier structure along the channel axis, but there is meaningful variation between tokens. So values should be quantized the ordinary way: per token.

The algorithm

KIVI applies each rule where it helps. For the key cache we quantize per channel: elements are grouped along the channel dimension, so each channel shares one scale and zero-point across a group of tokens, and the group is stored at 2 bits. For the value cache we quantize per token, also at 2 bits. Both use asymmetric quantization (a scale plus a zero-point per group), so the four 2-bit levels span each group's actual min-to-max range rather than a range forced to be symmetric about zero. There is no calibration set, no fine-tuning, and no knob to tune per model, hence "tuning-free."
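
The two grouping rules can be sketched in NumPy as follows. This is an illustration, not the released kernel: the function names are hypothetical, the group size `G = 32` is an assumption chosen for the example, the inputs are random, and the 2-bit codes are held one per `uint8` rather than packed four to a byte.

```python
import numpy as np

BITS = 2
LEVELS = 2 ** BITS - 1   # 3, i.e. four representable values

def quantize_groups(x, axis):
    """Asymmetric min-max quantization with parameters shared along `axis`."""
    lo = x.min(axis=axis, keepdims=True)
    hi = x.max(axis=axis, keepdims=True)
    scale = np.maximum(hi - lo, 1e-8) / LEVELS
    q = np.clip(np.round((x - lo) / scale), 0, LEVELS).astype(np.uint8)
    return q, scale, lo

def quantize_keys(k, group):
    """Per-channel: each channel has its own scale per block of `group` tokens."""
    t, d = k.shape
    assert t % group == 0, "token count must be a multiple of the group size"
    q, scale, zero = quantize_groups(k.reshape(t // group, group, d), axis=1)
    return q.reshape(t, d), scale, zero

def quantize_values(v, group):
    """Per-token: each token has its own scale per block of `group` channels."""
    t, d = v.shape
    assert d % group == 0, "channel count must be a multiple of the group size"
    q, scale, zero = quantize_groups(v.reshape(t, d // group, group), axis=2)
    return q.reshape(t, d), scale, zero

def dequantize_keys(q, scale, zero, group):
    t, d = q.shape
    return (q.reshape(t // group, group, d) * scale + zero).reshape(t, d)

def dequantize_values(q, scale, zero, group):
    t, d = q.shape
    return (q.reshape(t, d // group, group) * scale + zero).reshape(t, d)

rng = np.random.default_rng(0)
k = rng.standard_normal((64, 128))   # (tokens, channels), synthetic
v = rng.standard_normal((64, 128))
G = 32                               # illustrative group size (assumption)

kq, k_scale, k_zero = quantize_keys(k, G)
vq, v_scale, v_zero = quantize_values(v, G)
print(k_scale.shape, v_scale.shape)  # (2, 1, 128) (64, 4, 1)
```

The shapes of the scale tensors show the difference: keys carry one scale per channel per block of tokens, values carry one scale per token per block of channels.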

There is one system wrinkle. Per-channel quantization of keys conflicts with streaming decode: each new token appends a new row, but a per-channel group spans many rows, so its scale and zero-point cannot be fixed until those tokens exist. We handle this by keeping a small floating-point residual buffer of the most recent tokens and quantizing in chunks: the newest tokens stay in full precision until a group is full, and the amortized memory still approaches the 2-bit target.
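
The residual-buffer idea can be sketched as a small class. This is a simplified illustration rather than the actual implementation: `StreamingKeyCache` and its methods are hypothetical names, the group size is an arbitrary choice, and codes are stored unpacked in `uint8`.

```python
import numpy as np

class StreamingKeyCache:
    """Keeps recent keys in full precision and quantizes them chunk by chunk."""

    def __init__(self, group=32, bits=2):
        self.group = group
        self.levels = 2 ** bits - 1
        self.chunks = []     # (codes, scale, zero) for each quantized chunk
        self.residual = []   # newest tokens, still in floating point

    def append(self, key_row):
        """Add one token's key vector; quantize once a full group has arrived."""
        self.residual.append(np.asarray(key_row, dtype=np.float32))
        if len(self.residual) == self.group:
            self._flush()

    def _flush(self):
        block = np.stack(self.residual)            # (group, channels)
        lo = block.min(axis=0, keepdims=True)      # per-channel statistics
        hi = block.max(axis=0, keepdims=True)
        scale = np.maximum(hi - lo, 1e-8) / self.levels
        codes = np.clip(np.round((block - lo) / scale), 0, self.levels)
        self.chunks.append((codes.astype(np.uint8), scale, lo))
        self.residual = []

    def materialize(self):
        """Dequantize stored chunks and append the full-precision residual."""
        parts = [c.astype(np.float32) * s + z for c, s, z in self.chunks]
        if self.residual:
            parts.append(np.stack(self.residual))
        if not parts:
            return np.zeros((0, 0), dtype=np.float32)
        return np.concatenate(parts, axis=0)

rng = np.random.default_rng(0)
cache = StreamingKeyCache(group=4)
for _ in range(10):                      # simulate 10 decode steps
    cache.append(rng.standard_normal(8))
print(len(cache.chunks), "quantized chunks,", len(cache.residual), "residual tokens")
```

After 10 steps with a group of 4, two chunks have been quantized and the last two tokens are still held in full precision, so the floating-point buffer never grows beyond one group.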

Results

Quality: on Llama-2, Falcon, and Mistral across MMLU-style and long-context benchmarks, KIVI at 2 bits is within noise of full-precision KV. On generation tasks the accuracy deltas we saw were typically under one point.

System: going from 16 bits to 2 bits shrinks the cache by 8× in raw bit-width, and the memory that frees up can hold more concurrent requests. In practice we see up to 2.6× lower peak memory (including model weights), up to 4× larger batch size at the same context length, and 2.35–3.47× end-to-end throughput on long-context workloads.
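
A back-of-the-envelope calculation shows how the raw bit ratio translates into bytes. The sketch below is arithmetic under assumptions, not a measurement: it uses a Llama-2-7B-like shape (32 layers, hidden size 4096), a 4096-token context at batch size 1, and assumes one 16-bit scale and one 16-bit zero-point per group of 32 elements. `kv_cache_bytes` is a hypothetical helper.

```python
def kv_cache_bytes(layers, hidden, seq_len, batch, bits, group=None, meta_bits=16):
    """Bytes needed for keys plus values.

    If `group` is set, one scale and one zero-point (each `meta_bits` wide)
    are charged per `group` elements.
    """
    elements = 2 * layers * hidden * seq_len * batch   # 2 = keys and values
    bits_per_element = bits
    if group is not None:
        bits_per_element += 2 * meta_bits / group      # quantization metadata
    return elements * bits_per_element / 8

GIB = 2 ** 30
fp16 = kv_cache_bytes(32, 4096, 4096, 1, bits=16)
int2 = kv_cache_bytes(32, 4096, 4096, 1, bits=2, group=32)

print(f"fp16 cache : {fp16 / GIB:.3f} GiB")
print(f"2-bit cache: {int2 / GIB:.3f} GiB (including scales and zero-points)")
print(f"cache-only reduction: {fp16 / int2:.2f}x")
```

Under these assumptions the metadata adds one bit per element, so the cache itself shrinks by 16/3, about 5.3×, rather than the full 8×. Model weights and the full-precision residual tokens are not counted here, and both reduce the end-to-end saving further.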

Impact

The per-channel-keys / per-token-values split turned out to be a durable observation — KIVI’s finding inspired the QuantizedCache in Hugging Face Transformers and has been replicated in a range of follow-up KV compression work. In a later ICML 2025 paper we traced the asymmetry itself to RoPE: rotary position encoding interacts with the key projection in a way that concentrates magnitude into a small, fixed set of channels, explaining why the keys — and only the keys — have persistent outliers.

Citation

@inproceedings{liu2024kivi,
  title     = {{KIVI}: A Tuning-Free Asymmetric 2-bit Quantization for {KV} Cache},
  author    = {Liu, Zirui and Yuan, Jiayi and Jin, Hongye and Zhong, Shaochen and Xu, Zhaozhuo and Braverman, Vladimir and Chen, Beidi and Hu, Xia},
  booktitle = {International Conference on Machine Learning (ICML)},
  year      = {2024}
}