Projects

Chef's Selections

Adopted in HuggingFace Transformers (QuantizedCache)

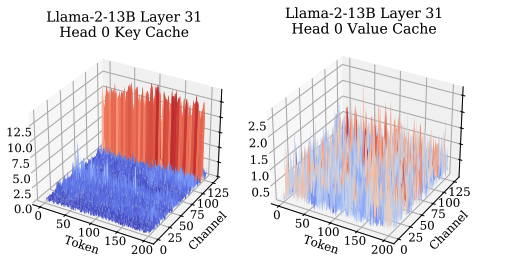

KIVI: A Tuning-Free Asymmetric 2-bit Quantization for KV Cache

The KV cache has an asymmetric structure: keys have a few fixed outlier channels, values don't. We quantize keys per-channel and values per-token at 2 bits, with no tuning required. Result: 2.6× lower peak memory and 2.35–3.47× higher end-to-end throughput with essentially no quality loss.
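As a rough illustration of why the quantization axis matters, here is a minimal NumPy sketch. It is not the KIVI implementation: the helper names, the synthetic data, and the single offset channel standing in for a "fixed outlier channel" are all assumptions, and the 2-bit codes are stored unpacked in `uint8` for readability.

```python
import numpy as np

def quantize_2bit(x, axis):
    """Asymmetric uniform 2-bit quantization; min/max are taken along `axis`."""
    levels = 3  # 2 bits give integer codes 0..3
    lo = x.min(axis=axis, keepdims=True)
    hi = x.max(axis=axis, keepdims=True)
    scale = (hi - lo) / levels
    scale = np.where(scale == 0, 1.0, scale).astype(np.float32)  # avoid divide-by-zero
    codes = np.clip(np.round((x - lo) / scale), 0, levels).astype(np.uint8)
    return codes, scale, lo

def dequantize(codes, scale, lo):
    return codes.astype(np.float32) * scale + lo

def mean_abs_error(x, axis):
    codes, scale, lo = quantize_2bit(x, axis)
    return float(np.abs(dequantize(codes, scale, lo) - x).mean())

rng = np.random.default_rng(0)
tokens, channels = 64, 16
keys = rng.normal(size=(tokens, channels)).astype(np.float32)
keys[:, 3] += 20.0  # hypothetical outlier channel: large in every token
values = rng.normal(size=(tokens, channels)).astype(np.float32)

# Keys per-channel: one (scale, zero-point) per channel, statistics over tokens.
k_codes, k_scale, k_lo = quantize_2bit(keys, axis=0)
# Values per-token: one (scale, zero-point) per token, statistics over channels.
v_codes, v_scale, v_lo = quantize_2bit(values, axis=1)

key_err_per_channel = mean_abs_error(keys, axis=0)
key_err_per_token = mean_abs_error(keys, axis=1)  # outlier inflates every token's range
print(key_err_per_channel, key_err_per_token)
```

In this toy setup, quantizing keys per-token lets the outlier channel stretch every token's range, so the remaining channels collapse onto one or two codes. Per-channel statistics confine the outlier's effect to its own channel.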

Project page · Paper · Code

Integrated into Llama.cpp · Featured at Google I/O

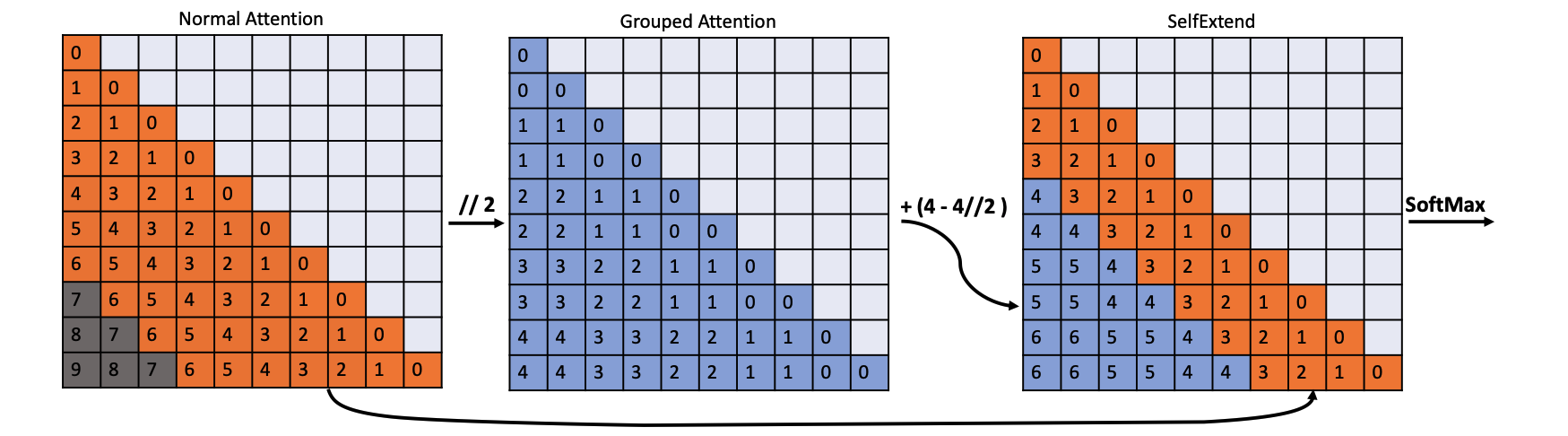

LLM Maybe LongLM: Self-Extend LLM Context Window Without Tuning

LLMs break on long contexts because they see position IDs they were never trained on. Self-Extend fixes it at inference time: keep fine-grained positions for nearby tokens, and floor-divide positions of distant tokens so they fall back into the training range. No fine-tuning required, and Llama / Mistral extend their effective context window by 4–8× with minimal quality loss.
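The position remapping can be sketched in a few lines of plain Python. This is an illustrative reading of the idea rather than the released code: the function name, the shift term that keeps the two regions contiguous, and the example sizes (a 4,096-token training range, group size 8, neighbor window 512) are assumptions.

```python
def self_extend_rel_pos(q_pos, k_pos, group_size, window):
    """Relative position a query at q_pos sees for a key at k_pos."""
    dist = q_pos - k_pos
    if dist < window:
        # Nearby tokens keep their exact, fine-grained relative position.
        return dist
    # Distant tokens: floor-divide both positions, then shift so the grouped
    # region starts where the neighbor window ends.
    shift = window - window // group_size
    return q_pos // group_size - k_pos // group_size + shift

train_len = 4096          # assumed pretraining context length
seq_len = 4 * train_len   # a sequence 4x longer than the training range
group_size, window = 8, 512

last = seq_len - 1
max_rel = max(self_extend_rel_pos(last, k, group_size, window) for k in range(seq_len))
print(max_rel, max_rel < train_len)
```

Even for the last token of the 16,384-token sequence, the largest relative position stays below the assumed training length, so the model never sees a position it was not trained on.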

Paper · Code · Llama.cpp PR · Google I/O

NeurIPS 2025 Oral · Acknowledged in Thinking Machines Lab's blog

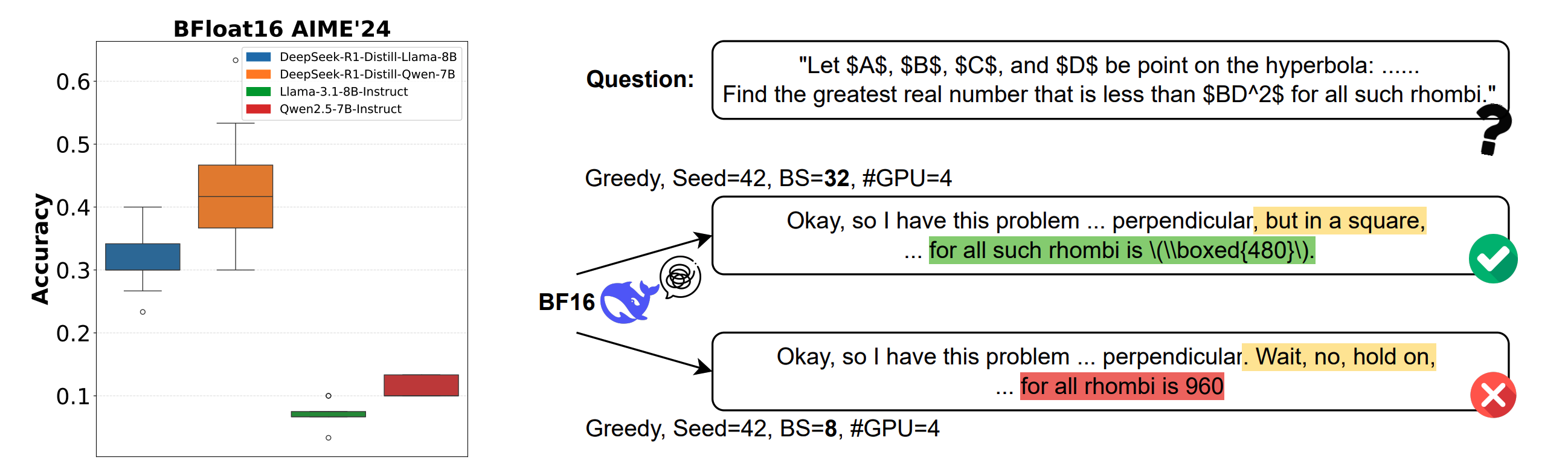

Understanding and Mitigating Numerical Sources of Nondeterminism in LLM Inference

The same prompt under BF16 greedy decoding can give different answers on different GPUs: for reasoning models, accuracy varies by up to 9% and response length by up to 9,000 tokens. The cause is non-associative floating-point arithmetic: the reduction order shifts with batch size, GPU count, and GPU type, and the resulting rounding differences cascade through long chains of thought.
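A small NumPy example makes the non-associativity concrete. It uses float32 as a stand-in for BF16 (NumPy has no native BF16 type), and the chunk count is a hypothetical proxy for how a reduction gets split across devices or batch layouts; the input values are hand-picked so the effect is visible with only four numbers.

```python
import numpy as np

def sequential_sum(values):
    """Left-to-right float32 accumulation, rounding after every add."""
    acc = np.float32(0.0)
    for v in values:
        acc = np.float32(acc + v)
    return acc

def chunked_sum(values, n_chunks):
    """Sum each chunk on its own, then sum the partial results."""
    partials = [sequential_sum(chunk) for chunk in np.array_split(values, n_chunks)]
    return sequential_sum(partials)

# Near 1e8, adjacent float32 values are 8 apart, so adding 1 is rounded away.
x = np.array([1e8, 1.0, -1e8, 1.0], dtype=np.float32)

one_chunk = chunked_sum(x, 1)   # ((1e8 + 1) - 1e8) + 1
two_chunks = chunked_sum(x, 2)  # (1e8 + 1) + (-1e8 + 1)
print(one_chunk, two_chunks)    # same inputs, different results
```

The inputs are identical in both calls; only the grouping of the additions changes, which is exactly what happens when batch size or GPU count changes the reduction order.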

Project page · Paper · Code · Talk · Thinking Machines Lab blog

Upstreaming to SGLang (PR under review, to be merged)

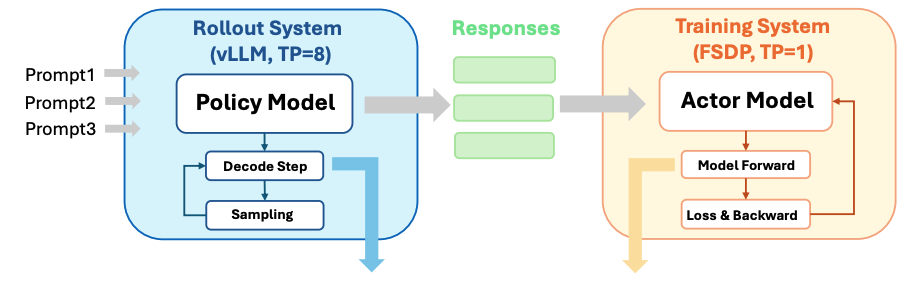

Deterministic Inference across Tensor Parallel Sizes That Eliminates Training-Inference Mismatch

In RL post-training, the rollout engine (vLLM, TP=8) and the trainer (FSDP, TP=1) disagree on per-token probabilities because their reduction trees differ. Tree-Based Invariant Kernels align intra- and inter-GPU reductions into a single tree, giving bitwise-identical results across TP sizes and eliminating the silent off-policy drift.
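The core idea, one fixed reduction tree regardless of how the data is sharded, can be shown with a toy NumPy sketch. This is not the project's kernels: `tree_sum` and `sharded_tree_sum` are hypothetical helpers, the "GPUs" are just contiguous array shards, and both the input length and the shard count are assumed to be powers of two.

```python
import numpy as np

def tree_sum(values):
    """Balanced binary-tree reduction in float32 (length must be a power of two)."""
    level = [np.float32(v) for v in values]
    while len(level) > 1:
        # Add adjacent pairs; each pass builds one level of the tree.
        level = [np.float32(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]

def sharded_tree_sum(values, tp):
    """Simulate TP=tp: a local tree per shard, then a tree over the shard partials."""
    shards = np.split(values, tp)                 # contiguous, equal-sized shards
    partials = [tree_sum(shard) for shard in shards]  # intra-"GPU" reduction
    return tree_sum(partials)                     # inter-"GPU" reduction

rng = np.random.default_rng(0)
x = rng.normal(size=1024).astype(np.float32)

results = {tp: sharded_tree_sum(x, tp) for tp in (1, 2, 4, 8)}
bit_patterns = {r.tobytes() for r in results.values()}
print(results, len(bit_patterns) == 1)
```

Because the shard boundaries line up with subtree boundaries, the local trees plus the cross-shard tree form the same overall tree for every shard count, so every addition sees the same operands in the same order.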